Infrastructure for Multilingual Text Analysis

2022-01-27

This presentation was delivered by Simon Musgrave and Peter Sefton at an online event, Digital Approaches to Multilingual Text Analysis, on January 27th 2022.

About this event

DH tools and methods have been applied across a variety of corpora, but text analysis of English-language sources has dominated this field. These approaches are increasingly being used in languages and linguistics research for non-English corpora. At the same time, the integration of these tools has seen new research questions and possibilities emerge, including questions such as “Is there a non-Anglo digital humanities (DH), and if so, what are its characteristics” (Fiormonte 2016: 438). Recent studies have begun to examine aspects such as OCR for historical text analysis and data mining (Hill & Hengchen 2019; Goodman et al. 2018), multilingual computation analysis (Dombrowski 2020), semantic and sentiment analysis (Daems et al. 2019) and historical linguistics (Evans 2016), among others. The papers in this conference present a diverse range of projects and critiques of digital methods across different languages.

January 27th 1:45pm – 7:30pm AEDT

Conveners: Joshua Brown, Senior Lecturer and Convenor, Italian Studies, Australian National University, and Katrina Grant, Senior Lecturer, Centre for Digital Humanities Research, Australian National University

If we accept that sharing and reuse of data (consistent with ethical considerations) should be the default, then managing even small amounts of data can be onerous. Infrastructure that takes on this task relieves researchers of some of the burden and brings further advantages: more reliable FAIR compliance, access to data management experts, and greater responsiveness to changing technology (at least for the life of the infrastructure).

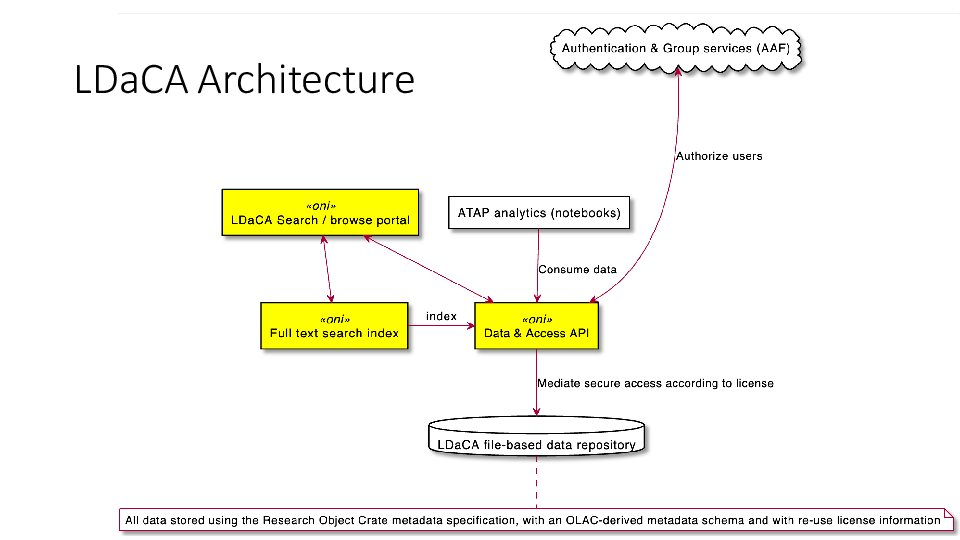

Regardless of where data is housed, access will be through one portal (external data may also be accessible by other routes). Access control will follow the CARE Principles for Indigenous Data Governance: original providers of data have moral rights which must be considered, and data owners or custodians will control lists of authorised users.

'Record language use in Australia' covers a huge range of possibilities. Current figures are on the slide. In addition, at least 250 Australian languages were spoken before European arrival; at least half are no longer spoken, but records remain (though not for all of them). Unicode is not always without problems. How are we doing in meeting these goals so far? First, the architecture...

The LDaCA technical architecture is based on the Arkisto platform, storing data in the Oxford Common File Layout (OCFL), with data objects such as linguistic items and collections described in detail using Research Object Crate (RO-Crate). RO-Crate is a linked-data approach to describing data which is based on widely used standards for structural and descriptive properties such as dates and contributors, with extensions for language data being built on work in the Open Language Archives Community (OLAC). RO-Crate is an international collaboration with diverse contributors. The specification is in English, and most RO-Crates at this point have English metadata and contents, but there is demand for content in other languages, and future versions of the spec will cover multilingual use cases.
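To make the RO-Crate description more concrete, here is a minimal sketch of what the metadata for one multilingual object might look like, built in Python. It follows the general shape of an RO-Crate 1.1 metadata file (a metadata descriptor, a root dataset, and file entities), but the object name, file names and the `build_crate` helper are hypothetical, and this is not the LDaCA metadata profile. A complete crate would also carry properties such as a description, publication date and licence.

```python
import json


def build_crate(name, files):
    """Build a pared-down RO-Crate 1.1 metadata document as a dict.

    `files` maps a relative path to a (BCP 47 language tag, media type) pair.
    """
    # One File entity per translation, tagged with its language.
    file_entities = [
        {"@id": path, "@type": "File", "inLanguage": lang, "encodingFormat": mime}
        for path, (lang, mime) in files.items()
    ]
    # The root dataset lists its parts by reference.
    root = {
        "@id": "./",
        "@type": "Dataset",
        "name": name,
        "hasPart": [{"@id": entity["@id"]} for entity in file_entities],
    }
    # The metadata descriptor points at the root dataset.
    descriptor = {
        "@id": "ro-crate-metadata.json",
        "@type": "CreativeWork",
        "conformsTo": {"@id": "https://w3id.org/ro/crate/1.1"},
        "about": {"@id": "./"},
    }
    return {
        "@context": "https://w3id.org/ro/crate/1.1/context",
        "@graph": [descriptor, root, *file_entities],
    }


# Hypothetical object with translations stored in separate files.
crate = build_crate(
    "Example government fact sheet (hypothetical)",
    {
        "factsheet-en.pdf": ("en", "application/pdf"),
        "factsheet-vi.txt": ("vi", "text/plain"),
        "factsheet-tr.txt": ("tr", "text/plain"),
        "factsheet-fa.txt": ("fa", "text/plain"),
    },
)
print(json.dumps(crate, indent=2, ensure_ascii=False))
```

Tagging each file with `inLanguage` is one way a browse or search interface could tell translations apart; the sketch assumes one language per file.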

Simon pointed out here that the languages all use different writing systems, with 3 completely distinct systems (Turkish and Vietnamese use extended Roman scripts, while Farsi uses an Arabic-based script).

This quick demonstration screencast shows a work-in-progress prototype of the LDaCA portal, which will give controlled access to language resources to those who are licensed to see them. In this demonstrator we have openly available multilingual Australian Government documents in PDF and text format, and a small history dataset containing interviews with women from Western Sydney, Farms to Freeways. Eventually the LDaCA repository will contain a wide variety of data including speech, video, sign, images and digitized text, with a browse and search interface to allow researchers to find data they are interested in – provided, of course, that they have been granted an appropriate licence to view and use the data. In this demonstration our colleague Moises Sacal Bonequi performs searches in different languages to find repository objects of interest. Each object has multiple translations stored in separate files, in both PDF and text format.
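Searching text in several scripts is one place where the earlier remark that Unicode is not always without problems becomes practical. The sketch below is an illustration only, not a description of how the portal's search works: it uses Python's standard `unicodedata` module to show that the same Vietnamese phrase can be stored as different code point sequences, and that normalising before comparison makes them match. The `search_key` helper is a hypothetical name.

```python
import unicodedata


def search_key(text):
    """Normalise to NFC, then case-fold, so equivalent spellings compare equal."""
    return unicodedata.normalize("NFC", text).casefold()


# The same Vietnamese phrase in precomposed (NFC) and decomposed (NFD) form.
composed = unicodedata.normalize("NFC", "Tiếng Việt")
decomposed = unicodedata.normalize("NFD", composed)

# The two forms display identically but are different code point sequences,
# so a naive string comparison treats them as different.
print(composed == decomposed)
print(len(composed), len(decomposed))

# After normalisation the two forms produce the same search key.
print(search_key(composed) == search_key(decomposed))

# Normalisation does not solve everything. Python's case folding is
# locale-independent, so Turkish dotless ı and capital I are not matched.
print(search_key("ISPARTA") == search_key("ısparta"))
```

Language-specific rules, such as Turkish casing, need handling beyond generic normalisation, and Arabic-script text can raise comparable issues where visually similar letters have separate code points.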

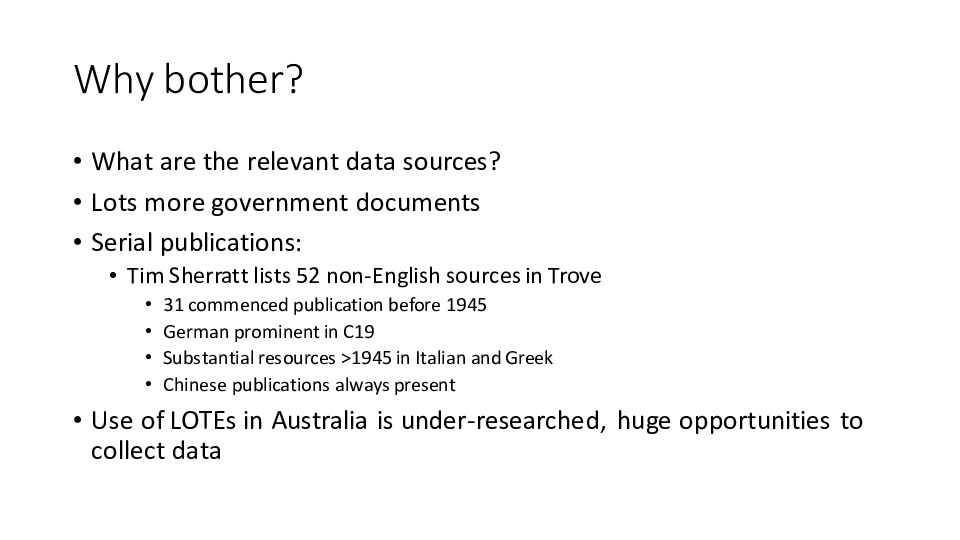

Is there data in Australia which makes it worth worrying about this? Yes – at least two important sources of written material, plus this is an under-researched field with lots of questions to be answered and therefore lots of data to be collected. For example, there is research on differing usage in Vietnamese depending on speakers' time of arrival in Australia (1970s v. later), yet to be replicated with other similarly time-layered communities.