Alf (Andrew) Leahy and I were recently in Melbourne for the NeCTAR Über Dojo event.

By coming to this two-day event you will be able to go back to your institution with a signed certificate showing that you've been 'black belted' as a Cloud expert. Specifically, we'll train and qualify you in using the following tools and data in the Cloud:

How to use image management tools for production-level VM management on the Cloud, e.g. Puppet or Chef

How to use libraries to access storage data APIs, e.g. SWIFT/S3, NFS, Object Store, etc.

We'll also use this event to get you, the experts, to tell us what new features we need for all these new toys (I mean infrastructure ;-)

The climax of this thing was an Iron Chef-style challenge. This is an initial rough post about our two-hour hack (which we cleaned up over a few more hours post-event). Andrew will write up the event for the UWS eResearch blog. Thanks to Remko Duursma and Craig Barton for letting us use their code and data for the demo.

Background: HIE* researchers use R

*Hawkesbury Institute for the Environment

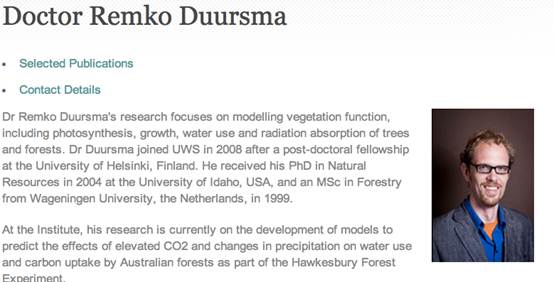

Meet Remko

http://www.uws.edu.au/hie/people/researchers/doctor_remko_duursma

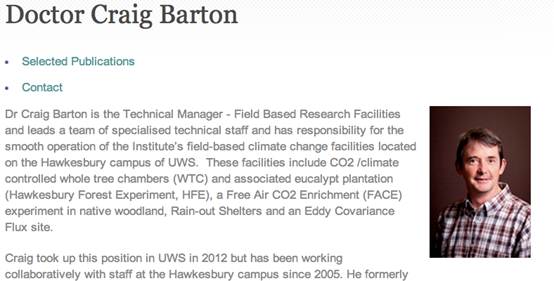

And Craig

http://www.uws.edu.au/hie/people/admin_and_technical_staff/dr_craig_barton

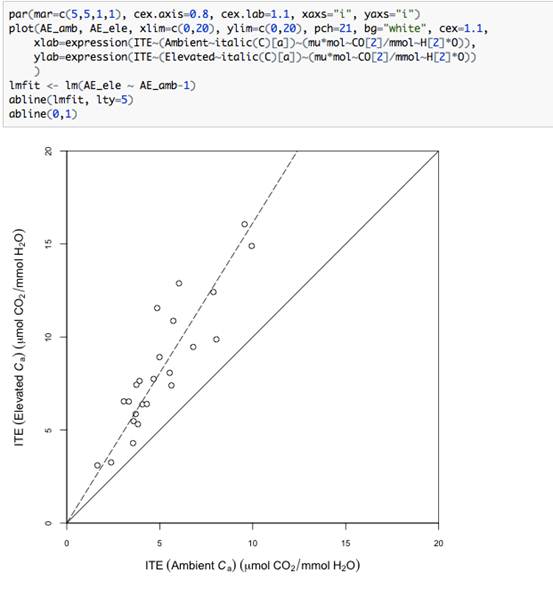

They run R to clean data & model stuff…

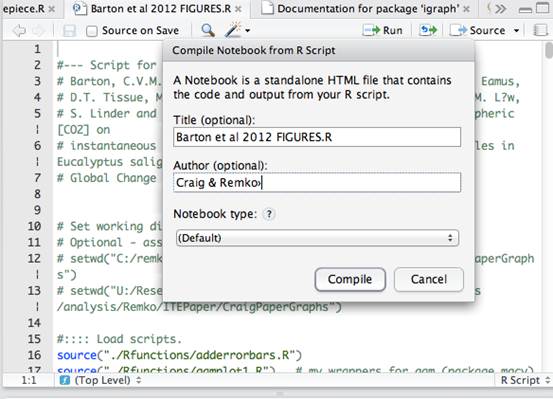

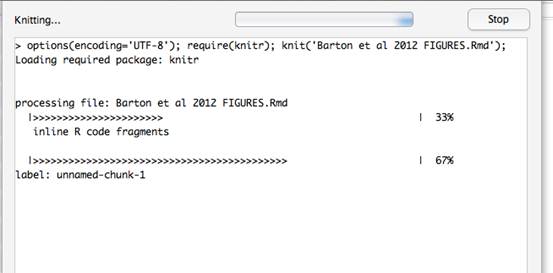

This R Notebook shows code and output together in HTML

Andrew (Alf) and I are at a workshop in Melbourne - I was wondering if I can use this as a demo tomorrow - ie it will appear on screen but not be made available over the net. The short notice is because the idea has only emerged today.

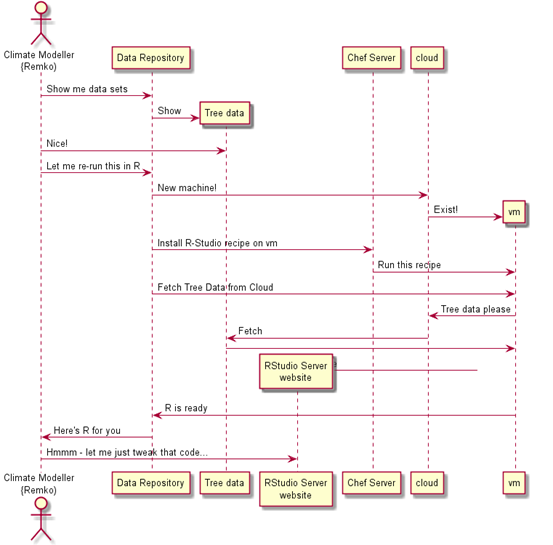

The thing we'd be demoing would be to _simulate_ the following:

Data set + scripts like the attached is sitting in a web based repository.

Repository offers user the opportunity to download the package OR - Get an interactive R-Studio shell where they can re-run the data

User clicks a "see with interactive shell" link.

Our server:

- Fires up a virtual server in the cloud
- Installs the server version of R-Studio
- Creates a user account
- Pushes the data package onto the new server
- Unpacks the package
- Sets up R-Studio to run Knitr on the main script to create HTML
- Sends a link to the R-Studio to the user (maybe by email cos this might take a few minutes)

The user can see the plots etc, and will have access to the R environment to tweak things
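As a rough sketch, the server-side steps above could be strung together as an ordered pipeline in Python. Everything here is hypothetical: `Session`, `step` and `launch_interactive_shell` are names made up for illustration, and each step only records what it would do rather than touching a real cloud.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Session:
    """State carried through the simulated provisioning steps."""
    package: str
    user_email: str
    log: List[str] = field(default_factory=list)


def step(description: str) -> Callable[[Session], None]:
    """Build a placeholder step that only logs what it would do."""
    def run(session: Session) -> None:
        session.log.append(description)
    return run


# The order mirrors the list in the email above.
PIPELINE = [
    step("fire up a virtual server in the cloud"),
    step("install the server version of R-Studio"),
    step("create a user account"),
    step("push the data package onto the new server"),
    step("unpack the package"),
    step("set up R-Studio to run Knitr on the main script"),
    step("send the user a link to the R-Studio"),
]


def launch_interactive_shell(package: str, user_email: str) -> Session:
    """Run every step in order and return the session with its log."""
    session = Session(package=package, user_email=user_email)
    for run in PIPELINE:
        run(session)
    return session


if __name__ == "__main__":
    for line in launch_interactive_shell("dataset.zip", "user@example.org").log:
        print(line)
```

In a real implementation each placeholder would be swapped for a function that does the work and raises on failure, so a half-built server never gets mailed out.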

Our idea…

Remko’s response

That sounds fantastic. Please go ahead! Wait actually those are Craig's data :)**

>* Get an interactive R-Studio shell where they can re-run the data

YES that is perfect!

greetings

remko

**Craig gave us the go-ahead as well

Initial quick and dirty demo approach

We have written a simple CGI web script in Python which simulates a repository, with the capacity to orchestrate the steps below:

-

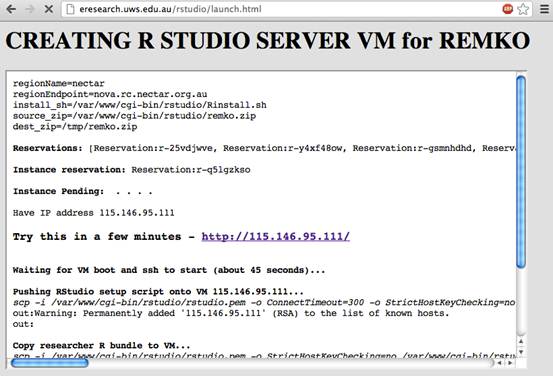

Create a new NeCTAR machine using Python with the Boto library

-

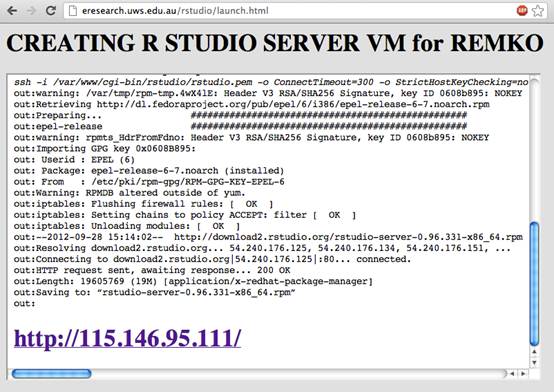

Connect via SSH and install R-Studio and dependencies, and make a user account

TODO: Use Chef for the initial server build – we learned about Chef on Monday but not enough to be able to create a new ‘recipe’ in an hour or so.

TODO: Use a snapshot image so users don’t have to wait ten minutes for a machine to start up and install all the prerequisites

- Copy the sample data set onto the VM

TODO: Use the cloud object storage so the data set is near to the VM (We know how, just didn’t have time)

TODO: See if we can get R-Studio to launch with an R Notebook by default and log-in automatically
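The machine-creation step might look roughly like this with Boto 2. This is a sketch, not our hack-day script: the endpoint host, port and path are assumptions that should be checked against the NeCTAR documentation, and the image ID, key name, flavour and security group names are placeholders. Boto is imported lazily so the launch logic can be tried with a stand-in connection.

```python
import time


def nectar_connection(access_key, secret_key):
    """Open an EC2-style connection with Boto 2 (endpoint details assumed)."""
    import boto
    from boto.ec2.regioninfo import RegionInfo

    region = RegionInfo(name="NeCTAR", endpoint="nova.rc.nectar.org.au")
    return boto.connect_ec2(
        aws_access_key_id=access_key,
        aws_secret_access_key=secret_key,
        is_secure=True,
        region=region,
        port=8773,
        path="/services/Cloud",
    )


def launch_server(conn, image_id, key_name, flavour="m1.small",
                  security_groups=("ssh", "rstudio"),
                  poll_seconds=5, max_polls=120):
    """Start one instance and wait until it reports the 'running' state."""
    reservation = conn.run_instances(
        image_id,
        key_name=key_name,
        instance_type=flavour,
        security_groups=list(security_groups),
    )
    instance = reservation.instances[0]
    for _ in range(max_polls):
        # update() refreshes the instance and returns its current state.
        if instance.update() == "running":
            return instance
        time.sleep(poll_seconds)
    raise RuntimeError("instance did not reach 'running' in time")


# Example use (needs real credentials and a real image ID):
# conn = nectar_connection("ACCESS_KEY", "SECRET_KEY")
# server = launch_server(conn, "ami-00000000", "my-keypair")
# print(server.id)
```

Passing the connection in as an argument keeps the launch logic separate from the NeCTAR-specific connection details.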

Why is this cool?

- Our researcher-colleagues like the idea
- It uses the cloud to solve a set of real problems*:
  - Makes it easy for ‘data shoppers’ to evaluate data sets
  - Potentially enables research outputs to be re-run in an exact environment for real reproducible workflows (Remko notes that you really need the exact R library versions sometimes; this could be an option.)

*Assuming a real implementation

Screenshot: script creating a virtual server

Screenshot: our script installs R-Studio*

*This process is really too slow to do on demand; we should launch from a pre-built snapshot. And the automation here is really hacky and crude – it would be better to do it using something like Chef, but that’s not a one-day project if you have never used it before.
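For a flavour of what that crude automation involves, here is a minimal sketch of driving the install over SSH from Python. It assumes an Ubuntu image with a default `ubuntu` login and the `gdebi` installer; the package URL, username and password are placeholders, and the function names are invented for this example rather than taken from our script.

```python
import shlex
import subprocess


def install_commands(username, password, rstudio_deb_url):
    """Shell commands to install R and RStudio Server and add a user."""
    deb_file = rstudio_deb_url.rsplit("/", 1)[-1]
    return [
        "sudo apt-get update",
        "sudo apt-get install -y r-base gdebi-core",
        "wget -q " + shlex.quote(rstudio_deb_url),
        "sudo gdebi -n " + shlex.quote(deb_file),
        "sudo useradd -m -s /bin/bash " + shlex.quote(username),
        "echo {} | sudo chpasswd".format(shlex.quote(username + ":" + password)),
    ]


def ssh_argv(host, key_file, command, login="ubuntu"):
    """Argument list for running one command on the new server."""
    return [
        "ssh", "-i", key_file,
        "-o", "StrictHostKeyChecking=no",
        login + "@" + host,
        command,
    ]


def provision(host, key_file, commands, runner=subprocess.check_call):
    """Run each command in turn; check_call stops at the first failure."""
    for command in commands:
        runner(ssh_argv(host, key_file, command))


# Example use (placeholder host, key and URL):
# cmds = install_commands("demo", "change-me",
#                         "http://example.org/rstudio-server.deb")
# provision("203.0.113.10", "my-keypair.pem", cmds)
```

Running this on every request is exactly the slow path described above; baking the result into a snapshot would mean only the user-account lines are needed at launch time.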

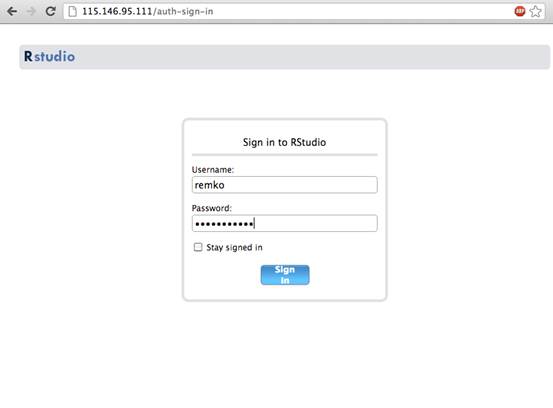

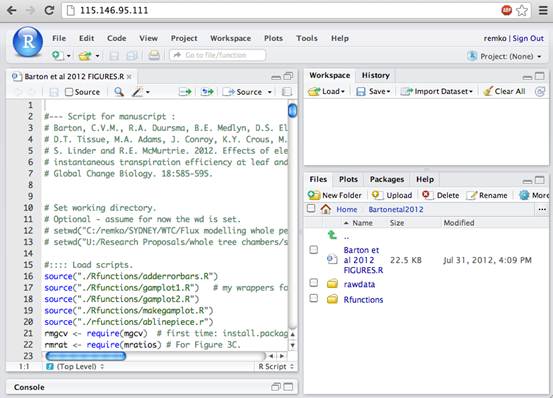

Result – an RStudio Server…

TODO: Automate the login by passing some kind of token?

With a data-set pre-loaded*

*We have not automated the installation of Knitr – so no R Notebook yet, but the code is runnable, and will spit out plot-images
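Automating the Knitr part could be as small as two more remote commands. The sketch below only builds the command strings; it assumes `Rscript` is on the server's path, uses a CRAN mirror URL we picked for illustration, and uses `knitr::spin` to turn a plain R script into a report. Depending on the knitr version, the `markdown` package may need installing as well. The helper names are invented for this example.

```python
import shlex


def rscript(expression):
    """Wrap an R expression so it can be run from a shell via Rscript."""
    return "Rscript -e " + shlex.quote(expression)


def notebook_commands(script_path, cran_mirror="https://cloud.r-project.org"):
    """Commands to install knitr and render an R script as a report."""
    return [
        rscript('install.packages("knitr", repos="{}")'.format(cran_mirror)),
        rscript('knitr::spin("{}")'.format(script_path)),
    ]


if __name__ == "__main__":
    # "analysis.R" is a placeholder for the data set's main script.
    for command in notebook_commands("analysis.R"):
        print(command)
```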

Show me the code

The code we produced is demo-quality hack-day code. This is not something you’d use in real life, but we’re releasing it on Google Code as an example and so we can remember where to start when we come back to this and try to do it properly, maybe as part of the data capture project at the Hawkesbury Institute for the Environment.

Copyright Peter Sefton and Andrew Leahy, 2012. Licensed under Creative Commons Attribution-Share Alike 2.5 Australia. <http://creativecommons.org/licenses/by-sa/2.5/au/>